
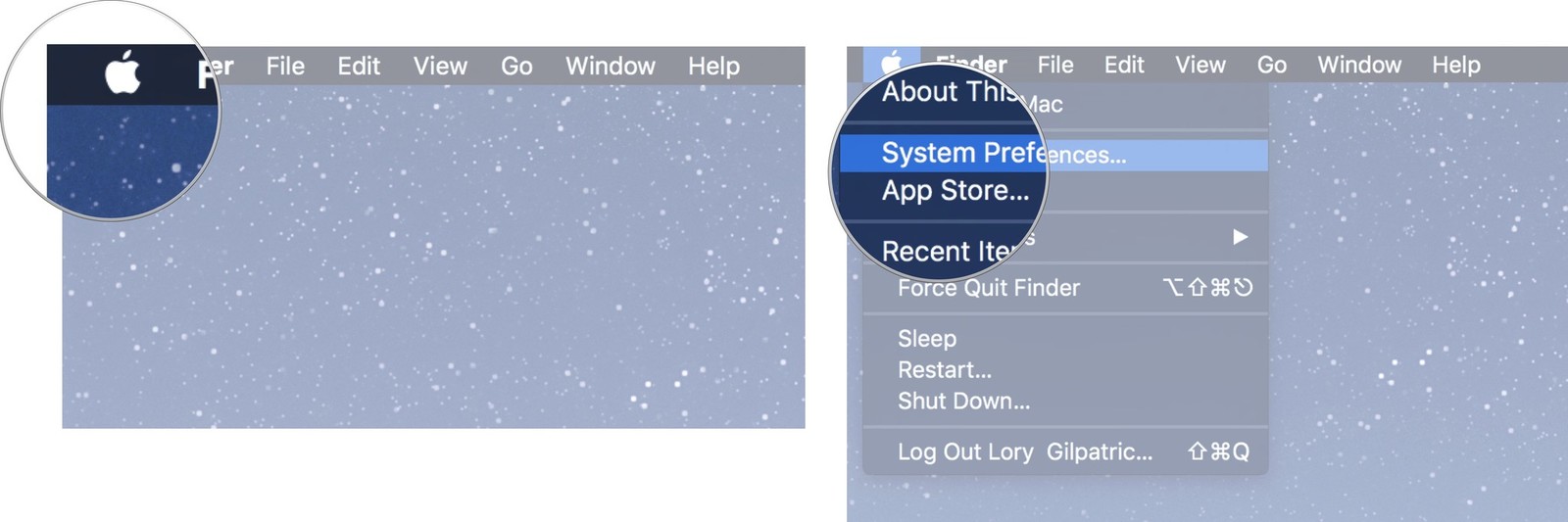

In the Create Partition window, choose exFAT under the File System tab. Then click Apply > Proceed. After that, you can use your external hard drive on both Mac and Windows machines.

Unlike Hive, Presto doesn't use MapReduce. Presto is an alternative to tools that query HDFS using pipelines of MapReduce jobs, such as Hive. Before we start, let's take a quick look at its main architecture principles.
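To make the MapReduce-versus-Presto contrast concrete, here is a toy Python sketch of two execution styles for a simple filter-then-aggregate query. It is not Hive's or Presto's actual code. The staged version fully materializes each step's output before the next step starts, roughly as a chain of MapReduce jobs does; the pipelined version streams rows through generator operators, in the spirit of Presto's in-memory pipelines. The `(country, amount)` row format and all function names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Iterator, List, Tuple

Row = Tuple[str, int]  # hypothetical (country, amount) rows


def staged(rows: List[Row], min_amount: int) -> Dict[str, int]:
    """Each stage builds its complete output before the next stage starts."""
    # Stage 1: filter; the whole intermediate result is materialized as a list.
    filtered: List[Row] = [r for r in rows if r[1] >= min_amount]
    # Stage 2: aggregate over the materialized intermediate result.
    totals: Counter = Counter()
    for country, amount in filtered:
        totals[country] += amount
    return dict(totals)


def scan(rows: Iterable[Row]) -> Iterator[Row]:
    # Source operator: yields one row at a time.
    for row in rows:
        yield row


def filter_op(rows: Iterator[Row], min_amount: int) -> Iterator[Row]:
    # Passes qualifying rows downstream as soon as they arrive.
    for row in rows:
        if row[1] >= min_amount:
            yield row


def sum_by_key(rows: Iterator[Row]) -> Dict[str, int]:
    # Sink operator: consumes the stream without building an intermediate list.
    totals: Counter = Counter()
    for country, amount in rows:
        totals[country] += amount
    return dict(totals)


def pipelined(rows: Iterable[Row], min_amount: int) -> Dict[str, int]:
    return sum_by_key(filter_op(scan(rows), min_amount))


data = [("US", 5), ("DE", 1), ("US", 7), ("FR", 3)]
print(staged(data, 3))     # {'US': 12, 'FR': 3}
print(pipelined(data, 3))  # {'US': 12, 'FR': 3}
```

Both styles return the same answer; the difference is that the staged version holds the full intermediate result between steps, while the pipelined version never does.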

Presto is a fast and scalable open source SQL engine. Architected for separation of storage and compute, Presto is cloud native and can query data in Azure data stores, Hadoop, SQL and NoSQL databases, and other data sources. This offering is maintained by Starburst, the leading contributors to Presto.

Microsoft users can create a bootable USB on Windows using a very simple and helpful tool; unfortunately, Mac does not have one. Whether you want to take a screenshot or just add a hashtag, Mac users have it the hard way.

Presto is a distributed SQL query engine for big data. See the User Manual for deployment instructions and end user documentation. Requirements: Mac OS X or Linux, and Java 8 Update 151 or higher (8u151+), 64-bit.

Apache Hive converts SQL queries into MapReduce jobs and then submits them to the Hadoop cluster. When we submit a SQL query, Hive reads the entire data set, so running MapReduce jobs over a large table becomes inefficient.

Presto is able to read data from the same schemas and tables using the same data formats, such as ORC and Avro. Because of this connectivity, Presto is a drop-in replacement for organizations using Hive today. Apache Hive is a data warehousing tool designed to easily output analytics results to Hadoop; Apache Hive and Presto are both analytics engines that businesses can use to generate insights and enable data analytics.

In an interpreter configuration, the Presto entry is declared with `name=Presto SQL` and `interface=presto`, followed by the specific options for connecting to the Presto server.

Can I find out the partitions of a Hive table before Presto executes the SQL? I used PyHive to connect to Hive and Presto.

Three essential questions about code refactoring: What is it? Why do it? How do you do it?

> Our product currently uses Presto to query data stored in Hive, Elasticsearch, and MySQL, and Presto also runs join queries across these different stores. But our business logic is fairly complex and the SQL is long, and it is written directly in the Java code, which makes it very hard to maintain. Is there a framework similar to MyBatis that models the table structures and extracts the SQL?
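Reading an entire data set is why Hive tables are usually partitioned, and it is also what lies behind the question of which partitions a query will touch. A minimal Python sketch, assuming the common Hive-style `key=value` directory layout, shows how an equality filter on a partition column narrows a scan to the matching directories instead of every file. The paths, the `ds` and `country` columns, and the helper names `parse_partition` and `prune` are hypothetical; this is not how Presto's Hive connector is implemented.

```python
from typing import Dict, List


def parse_partition(path: str) -> Dict[str, str]:
    """Turn a Hive-style path such as 'ds=2020-01-01/country=US' into a dict."""
    spec: Dict[str, str] = {}
    for part in path.strip("/").split("/"):
        key, sep, value = part.partition("=")
        if sep:  # skip path segments that are not key=value pairs
            spec[key] = value
    return spec


def prune(partitions: List[str], predicate: Dict[str, str]) -> List[str]:
    """Keep only partitions whose columns match every equality in the predicate.

    An empty predicate (no filter on partition columns) keeps every
    partition, which corresponds to scanning the whole table.
    """
    selected = []
    for path in partitions:
        spec = parse_partition(path)
        if all(spec.get(col) == val for col, val in predicate.items()):
            selected.append(path)
    return selected


# Hypothetical partition listing for a table partitioned by ds and country.
listing = [
    "ds=2020-01-01/country=US",
    "ds=2020-01-01/country=DE",
    "ds=2020-01-02/country=US",
]
print(prune(listing, {"ds": "2020-01-01"}))
# ['ds=2020-01-01/country=US', 'ds=2020-01-01/country=DE']
print(len(prune(listing, {})))  # 3: no partition filter, so nothing is pruned
```

In Presto itself, the Hive connector in many versions exposes a hidden table such as `"my_table$partitions"` (the table name here is hypothetical) that can be queried to list partitions; check the connector documentation for your version.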
Querying object storage with the Hive connector is a very common use case for Presto, and it often involves transferring large amounts of data. Multiple workers retrieve the objects from HDFS, or any other supported object storage, and process them. Repeated queries with different parameters, or even different queries from different users, often access, and therefore transfer, the same objects. Setting `connector.name=hive-alluxio` sets the connector type to the new Alluxio connector for Presto, which is named `hive-alluxio`.

Presto is a distributed system that runs on a cluster of nodes and is designed to run SQL queries even over petabytes of data. Presto's distributed query engine is optimized for interactive analysis and supports standard ANSI SQL, including complex queries, aggregations, joins, and window functions.

Many Hadoop users get confused when it comes to choosing among these engines for managing their databases. Impala, for example, is developed and shipped by Cloudera.

> I only took a quick look at the paper, but I didn't see what format the data is in when they're querying with Presto or Hive. I did a quick search for "Parquet" or "ORC" in the paper but didn't see anything.

Presto is an open source distributed SQL query engine for running interactive analytic queries against data sources of all sizes, ranging from gigabytes to petabytes. Typically, you seek out Presto when you experience intensely slow query turnaround from your existing Hadoop, Spark, or Hive infrastructure. In fact, Presto's genesis came from these slow Hive query conditions at Facebook back in 2012: it was designed and written from the ground up for interactive analytics, and it approaches the speed of commercial data warehouses while scaling to the size of organizations like Facebook.
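The reasoning behind putting a cache such as Alluxio in front of object storage is that repeated queries touching the same objects should not have to transfer them again. A minimal Python sketch of that read-through idea follows. `fetch_remote` stands in for a hypothetical call to HDFS or another object store, and the LRU eviction policy and capacity are assumptions chosen for illustration, not Alluxio's actual behavior.

```python
from collections import OrderedDict
from typing import Callable


class ReadThroughCache:
    """Serve repeated object reads locally; fetch from remote only on a miss."""

    def __init__(self, fetch_remote: Callable[[str], bytes], capacity: int = 128):
        self._fetch_remote = fetch_remote
        self._capacity = capacity
        self._entries: "OrderedDict[str, bytes]" = OrderedDict()
        self.remote_fetches = 0  # how many reads actually went to remote storage

    def read(self, key: str) -> bytes:
        if key in self._entries:
            # Hit: mark as most recently used and skip the remote transfer.
            self._entries.move_to_end(key)
            return self._entries[key]
        # Miss: transfer the object once and keep a local copy.
        data = self._fetch_remote(key)
        self.remote_fetches += 1
        self._entries[key] = data
        if len(self._entries) > self._capacity:
            # Evict the least recently used object.
            self._entries.popitem(last=False)
        return data


# Hypothetical remote store; a real deployment would read from HDFS or S3.
store = {"part-0.orc": b"...", "part-1.orc": b"..."}
cache = ReadThroughCache(lambda key: store[key], capacity=2)

# Two "queries" that touch the same objects.
for _ in range(2):
    for obj in ("part-0.orc", "part-1.orc"):
        cache.read(obj)
print(cache.remote_fetches)  # 2
```

The second pass is served entirely from the local copies, so only the first query pays for the transfer.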
This post looks at two popular engines, Hive and Presto, and assesses the best uses for each. Hive translates SQL queries into multiple stages of MapReduce, and it is powerful enough to handle huge numbers of jobs (although, as Arun C Murthy pointed out, modern Hive runs on Tez, whose computational model is similar to Spark's). MapReduce is fault-tolerant because it writes intermediate results to disk, and it enables batch-style data processing. On the Presto side, one of the most confusing aspects when starting out is the Hive connector; in fact, there are currently 24 different Presto data source connectors.
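MapReduce's fault tolerance comes from persisting each stage's output: if a later stage fails, it can be retried from the saved intermediate results instead of recomputing everything. The toy Python sketch below shows that idea for a two-stage word count, using a temporary directory as the "disk" and a simulated reducer failure. The file layout and the function names `map_stage` and `reduce_stage` are hypothetical and greatly simplified compared with Hadoop.

```python
import json
import os
import tempfile
from collections import defaultdict
from typing import Dict, List


def map_stage(lines: List[str], out_path: str) -> None:
    """Emit (word, 1) pairs and persist them, like a map task's output."""
    pairs = [(word, 1) for line in lines for word in line.split()]
    with open(out_path, "w") as f:
        json.dump(pairs, f)


def reduce_stage(in_path: str, fail: bool = False) -> Dict[str, int]:
    """Sum counts per word, reading only the persisted map output."""
    if fail:
        raise RuntimeError("simulated reducer failure")
    with open(in_path) as f:
        pairs = json.load(f)
    counts: Dict[str, int] = defaultdict(int)
    for word, n in pairs:
        counts[word] += n
    return dict(counts)


with tempfile.TemporaryDirectory() as workdir:
    intermediate = os.path.join(workdir, "map-output.json")
    map_stage(["presto hive", "hive tez"], intermediate)
    try:
        result = reduce_stage(intermediate, fail=True)
    except RuntimeError:
        # Retry only the reduce stage; the map output is still on disk.
        result = reduce_stage(intermediate)
print(result)  # {'presto': 1, 'hive': 2, 'tez': 1}
```

The map stage runs once; the retry reads its saved output. The price of this durability is the disk I/O between stages, which is the overhead Presto's in-memory pipelines avoid.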


Author: Tracy
